- Cutting-plane

- Branch & bound

- Branch & cut

- Dynamic programming

- Constraint programming

- Constraint programming, Solving methods

- Column generation

- Branch and price

- Golden-section search

- Interpolation methods

- line search

- Nelder–Mead method

- Successive parabolic interpolation

- Trust region

- Terms and Conditions

- Berndt–Hall–Hall–Hausman

- Broyden–Fletcher–Goldfarb–Shanno and L-BFGS

- Davidon–Fletcher–Powell

- Symmetric rank-one (SR1)

- Gradient conjugate

- Gauss–Newton

- gradient

- Mirror

- Levenberg–Marquardt

- Powell's dog leg method

- Truncated Newton

- Newton's method

Contents

ToggleCombinatorial optimization

THE'combinatorial optimization, also called discrete optimization, is a branch of optimization in applied mathematics and computer science, also linked to operations research, algorithms and complexity theory. Combinatorial optimization consists of finding a subset in a set containing the “best solutions”.

Finding an optimal solution in a discrete and finite set is an easy problem in theory: it suffices to try all the solutions, and to compare their qualities to see the best one. However, the combinatorial explosion of possible solutions of certain mathematical problems does not make it possible to obtain a solution in “human” time.

Some combinatorial optimization problems can be solved (exactly) in polynomial time, for example by a algorithm dynamic programming or by showing that the problem can be formulated as a linear optimization problem in real variables. In most cases, the problem is Np-hard and, to solve it, suitable algorithms must be used.

In practice, the physically acceptable complexity is often only polynomial. We are then satisfied with having a best approximate solution, obtained by a heuristic or a meta-heuristic. It is important to note that some of these methods provide global optimals, the difference with the exact methods is the fact of not having any formal proof (mathematical or finality proof) of its global optimality.

Solution tree

That is E all the solutions to the problems. It is assumed to be discrete and finite. The enumeration of solutions is represented by tree. All elements of E are separated into not disjoint non-empty subset each containing a part of the set of solutions. For example, the set can be separated in two in the backpack problem if we take or not an element xk in the bag.

The operation can be repeated for each subset until each set contains only one element. For the example of the backpack, each subset is separated until reaching the decision on the last element.

The root of the tree is E, the wires are the sub-assemblies etc. as shown in the following diagram:

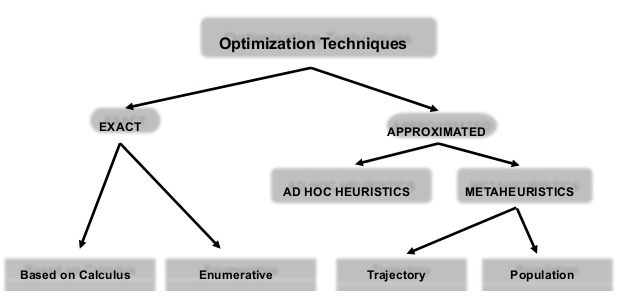

Solving techniques

Exact

These methods give a guarantee to find the optimal solution for an instance of finite size in a limited time and to prove its optimality.

Heuristic

When the complexity of a problem is exponential or exhibits a combinatorial explosion, the use of heuristics is recommended. This is a method that quickly provides a "good" solution to the problem. This approximate solution can then provide a starting point for using an exact method (such as the northwest corner for the transportation problem). All greedy and naive algorithms are heuristics.

It should be noted that heuristics are built to solve a given problem, and cannot be used in other conditions unlike meta-heuristics. The heuristic is evaluated according to three criteria:

- Quality of the result: the heuristic is confronted with the optimal results for a set of values of the given problem (we speak of benchmark). The quality of the solution can either be a distance to the optimal solution, or a probability of reaching it.

- Cost of heuristics: complexity in time and space.

- Domain of application: the admissibility domain of all the variables.

The number of heuristics for a given problem are numerous, so it is important to provide one that is fast and provides “good” results.

Meta-heuristic

Meta-heuristics have a higher level of abstraction than heuristics since they are methods that can be adapted to a given problem. Thus, the methods can be applied to various problems (in the form of a black box) without modifying their functioning. We also speak of general heuristics.

There are two kinds of meta-heuristics: population (To) or course (b). Most algorithms do not have a determined population, it is then possible to use the algorithm for a course or a population.

Both kinds work with the same four-step process:

- Neighborhood : the neighborhood of a solution is a subset of solutions reachable by a transformation of the initial solution (by permutation, by extension, by mutation, by ejection, etc.).

- Exploration : exploration consists of collecting data on the entire neighborhood.

- Operation : the exploitation uses the information collected to define the "interesting" areas of the search space formed by the neighborhood.

- Memory : the memory takes learning into account and makes it possible to determine the areas likely to have a global optimum. If the new solutions or the stopping criteria no longer make it possible to improve the solution, the algorithm stops. Otherwise, return to step 1. Some algorithms only work with stopping criteria, we then speak of metaheuristics without memory.