- Group method of data handling

- Combinatorial (COMBI)

- Multilayered Iterative (MIA)

- GN

- Objective System Analysis (OSA)

- Harmonical

- Two-level (ARIMAD)

- Multiplicative – Additive (MAA)

- Objective Computer Clusterization (OCC)

- Pointing Finger (PF) clusterization algorithm

- Analogs Complexing (AC)

- Harmonical Rediscretization

- Algorithm on the base of Multilayered Theory of Statistical Decisions (MTSD)

- Group of Adaptive Models Evolution (GAME)

Neural algorithms

A biological neural network (from which neural algorithms take their inspiration) refers to the information-processing elements of the nervous system, organized as a collection of nerve cells, called neurons, which are interconnected in networks and interact with each other using electrochemical signals. A biological neuron generally has dendrites, which receive the input signals and are connected to other neurons via synapses. The neuron reacts to these input signals and may produce an output signal on its output connection, called the axon.

The field of artificial neural networks (ANN), or neural algorithms, is concerned with computational models inspired by theories and observations of the structure and function of biological networks of neurons in the brain. These models are usually designed to solve problems in mathematics, computer science, and engineering. As such, the field draws on a great deal of interdisciplinary research in mathematics, neurobiology, and computer science.

An artificial neural network is usually composed of a collection of artificial neurons that are interconnected in order to perform a computation on input patterns and produce output patterns. These networks are adaptive systems capable of modifying their internal structure, usually the weights between network nodes, which allows them to be used for a variety of function approximation problems such as classification, regression, and feature extraction.
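As a rough illustration of a single artificial neuron, the sketch below computes a weighted sum of its inputs and passes it through a sigmoid activation. The function name `neuron_output`, the choice of sigmoid, and the weight values are assumptions made for this example; the text above does not prescribe a particular activation function.

```python
import math

def neuron_output(inputs, weights, bias):
    """Weighted sum of the inputs passed through a sigmoid activation."""
    activation = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1.0 / (1.0 + math.exp(-activation))

# Hypothetical weights, chosen only for illustration.
weights = [0.5, -0.5]
bias = 0.0

# The two weighted inputs cancel out, so the activation is 0.
y = neuron_output([1.0, 1.0], weights, bias)
```

Adapting the network then amounts to changing `weights` and `bias` so that the outputs move closer to the desired values.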

There are many types of neural networks, many of which fall into one of two categories:

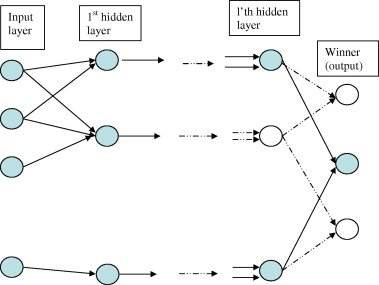

- Feedforward networks: input is provided on one side of the network and signals are propagated forward (in one direction) through the network structure to the other side, where the output signals are read. These networks can consist of a single neuron, a single layer, or several layers of neurons. Examples include the Perceptron, radial basis function networks, and multilayer perceptron networks.

- Recurrent networks: cycles in the network are allowed and the structure can be fully interconnected. Examples include the Hopfield network and bidirectional associative memory.
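The difference between the two categories can be sketched in a few lines. The first part is a tiny feedforward network with hand-picked weights that computes XOR in a single forward pass. The second is a small Hopfield-style recurrent network, whose state is fed back and updated repeatedly until it settles. All function names, weights, and the stored pattern are assumptions chosen for this illustration.

```python
def step(x):
    return 1 if x >= 0 else 0

def layer(inputs, weights, biases):
    """One feedforward layer: each row of weights feeds one neuron."""
    return [step(sum(w * x for w, x in zip(row, inputs)) + b)
            for row, b in zip(weights, biases)]

def feedforward_xor(a, b):
    # Hidden layer: an OR-like neuron and a NAND-like neuron.
    hidden = layer([a, b], [[1, 1], [-1, -1]], [-0.5, 1.5])
    # Output layer: AND of the two hidden neurons.
    return layer(hidden, [[1, 1]], [-1.5])[0]

def hopfield_weights(patterns):
    """Hebbian outer-product weights with no self-connections."""
    n = len(patterns[0])
    w = [[0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    w[i][j] += p[i] * p[j]
    return w

def hopfield_recall(w, state, sweeps=5):
    """Update each unit in turn from the current state of all the others."""
    state = list(state)
    for _ in range(sweeps):
        for i in range(len(state)):
            total = sum(w[i][j] * state[j] for j in range(len(state)))
            state[i] = 1 if total >= 0 else -1
    return state

stored = [1, -1, 1, -1, 1, -1]
w = hopfield_weights([stored])
noisy = [1, -1, 1, -1, 1, 1]          # last unit flipped
recalled = hopfield_recall(w, noisy)  # settles back onto the stored pattern
```

In the feedforward case the signal passes through each layer exactly once, whereas the recurrent network keeps reusing its own state as input, which is what the cycles in its structure make possible.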

The structure of an artificial neural network consists of nodes and weights, which usually require training on sample patterns from a problem domain. Some examples of learning strategies are:

- Supervised learning: the network is exposed to inputs that have a known expected response. The internal state of the network is modified to better match the expected result. Examples of this learning method include the backpropagation algorithm and the Hebb rule.

- Unsupervised learning: the network is exposed to input patterns from which it must discern meaning and extract features. The most common type of unsupervised learning is competitive learning, where neurons compete against each other based on the input pattern to produce an output pattern. Examples include neural gas, learning vector quantization, and the self-organizing map.
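The two strategies can be contrasted with a minimal sketch. For the supervised case it uses the classic perceptron learning rule (a simpler stand-in for backpropagation, not one of the methods named above) to learn the logical AND function from labelled samples. For the unsupervised case it shows one winner-take-all update of the kind used in competitive learning. The function names, learning rates, and data are assumptions for illustration.

```python
def predict(weights, bias, inputs):
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if total > 0 else 0

def train_perceptron(samples, epochs=20, rate=1):
    """Supervised: nudge the weights by the error against the known target."""
    weights, bias = [0, 0], 0
    for _ in range(epochs):
        for inputs, target in samples:
            error = target - predict(weights, bias, inputs)
            weights = [w + rate * error * x for w, x in zip(weights, inputs)]
            bias += rate * error
    return weights, bias

def competitive_step(prototypes, x, rate=0.5):
    """Unsupervised: the closest prototype wins and moves toward the input."""
    def squared_distance(p):
        return sum((pi - xi) ** 2 for pi, xi in zip(p, x))
    winner = min(range(len(prototypes)),
                 key=lambda k: squared_distance(prototypes[k]))
    prototypes[winner] = [pi + rate * (xi - pi)
                          for pi, xi in zip(prototypes[winner], x)]
    return winner

# Labelled samples for logical AND (supervised case).
and_samples = [([0, 0], 0), ([0, 1], 0), ([1, 0], 0), ([1, 1], 1)]
weights, bias = train_perceptron(and_samples)

# Unlabelled input and two prototype vectors (unsupervised case).
prototypes = [[0.0, 0.0], [1.0, 1.0]]
winner = competitive_step(prototypes, [0.8, 1.0])
```

The supervised update needs a `target` for every sample, while the competitive update uses only the input itself: no expected response is ever supplied.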

Artificial neural networks are usually difficult to configure and slow to train, but once trained they are very fast to apply. They are typically used in problem domains based on function approximation and are valued for their generalization and noise tolerance. They are often described as a black box, meaning that it is difficult to explain the decisions made by the network.