Contents

ToggleVector quantization learning algorithm

Vector quantization learning is linked to the self-organizing map which in turn is inspired by the self-organizing abilities of neurons in the visual cortex.

The information processing objective of the vector quantization learning algorithm is to prepare a set of codebook (or prototype) vectors in the domain of the observed input data samples and to use these vectors to classify untreated examples. An initially random vector pool is prepared which is then exposed to training samples.

A winner takes all strategy is used where one or more of the vectors most similar to a given input pattern are selected and adjusted to be closer to the input vector, and in some cases further from the winner for the finalists. . Repeating this process results in the distribution of codebook vectors in the input space that approximate the underlying distribution of the samples in the test dataset.

The vector quantization learning algorithm is a signal processing technique where density functions are approximated with prototype vectors for applications such as compression. Learning vector quantization is similar in principle, although prototype vectors are learned by a supervised winner-take-all method.

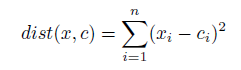

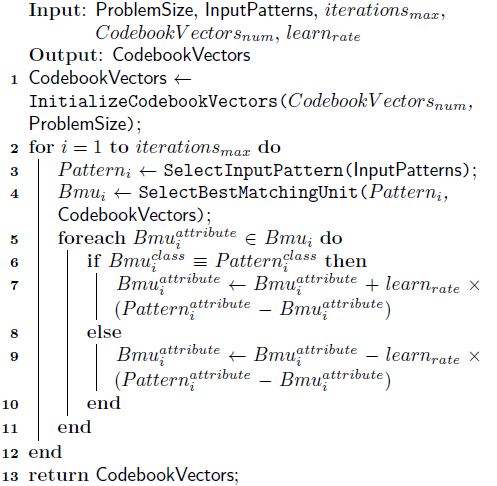

The following algorithm provides a pseudocode to prepare vector codebooks using the vector quantization learning method. Codebook vectors are initialized to small floating-point values or sampled from an available dataset. The best match unit (BMU) is the pool's codebooks vector that has the minimum distance to an input vector. A measure of distance between input patterns must be defined. For real-valued vectors, this is usually the Euclidean distance:

where n is the number of attributes, x is the input vector, and c is a given codebooks vector.

Quantization of learning vectors was designed for classification problems that contain existing data sets that can be used to supervise learning by the system. The algorithm does not support problems of regression. LVQ is nonparametric, which means that it does not rely on assumptions about the structure of the function it is approximating. The real values in the input vectors must be normalized such that x is in [0; 1].

Euclidean distance is commonly used to measure the distance between real-valued vectors, although other distance measures can be used (such as the dot product), and data-specific distance measures may be required for non-attributes. scalars. There must be sufficient training iterations to expose all training data to the model multiple times. The learning rate is typically linearly decreasing during the learning period starting from an initial value close to zero. The more complex the class distribution, the more codebook vectors will be needed, some problems may require thousands.

Multiple passes of the LVQ training algorithm are suggested for more robust use, where the first pass has a high learning rate to prepare codebook vectors and the second pass has a low learning rate and runs for a long time. long period (maybe 10 times more iterations).