Contents

ToggleAnt colony

Ant colony-based algorithms solve static problems and provide a high degree of flexibility and robustness in dynamic environments. The colony adapts to sudden changes in the environment and continues to function when certain individuals fail to complete their task.

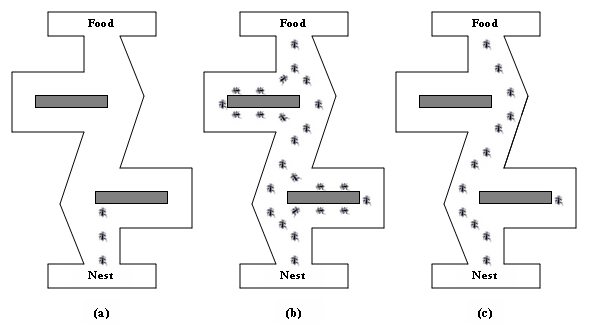

The principle of ant colony is simple. Ants are able to select the shortest way to get from the nest to a food source by depositing and following pheromone trails. These are deposited by ants on their way. An ant tends to follow the trail with the highest pheromone impact. Pheromones dissipate over time (not in all versions of the algorithm). We therefore conclude that after a time, the ants will all choose the shortest path between the nest and the food.

Ant colony optimization

The algorithm was introduced by Mr. Dorigo in 1991, initially intended for the TSP (travelling salesman). We represent the problem to be solved in the form of the search for a shortest path in a graph.

The structure of the ant colony algorithm is as follows:

- Initialize

- Repeat up to max cycles

- Every ant builds a solution

- Improving solutions by local search

- Reward the best solutions by adding pheromone

- Evaporate traces of pheromone

ACO type algorithms have proofs of convergence that will not be shown in this course.

Initialization (case of TSP)

The m ants are distributed randomly on the not cities. For each ant, a taboo list assigned to him, it contains his start list. Pheromone trails are initialized to a value vs positive not zero. Each edge of the graph ij has its pheromone track noted Tij= c.

Construction of the solution and local search

An ants k placed on a city i just now t chooses his destination city j in terms of :

- The visibility of this city notij (distance in the case of TSP)

- The amount of pheromone Tij(t) deposited on the runway.

The choice is random according to a probability where two parameters To and b control the relative importance of visibility and the amount of pheromones. Yes b = 0, the nearest cities are more likely to be selected, this is a algorithm gluttonous. Whether a = 0, only the amplification of the pheromones acts, there is premature convergence and non-optimal results. The new city is added to the taboo list of the ants.

Each ants proceeds in this way until they go through all the towns.

End of a cycle

For each ants k, we calculate the length of his turn THEk(t) then we empty its taboo-list (except initialization).

Then the pheromone tracks are updated:

Tij(t + 1) = p * Tij(t) + pheromonesij(t) with p between 0 and 1 and pheromonesij(t) the amount of pheromone deposited by the ants on the ridge ij to cycle t.

Evaporation from the track is calculated directly in the previous formula. Indeed p represents the persistence of the track, that is to say the memory of the quantity of pheromones which one keeps for the following cycle. Yes p = 1, there is no evaporation therefore no limitation of the autocatalytic phenomenon. Yes p = 0, the system has no memory, only the last cycle performed is taken into account.

For each ants k passing through the ridge ij, it increases the quantity of pheromone deposited according to the following calculation:

pheromonesij(t) + = Q / Lk(t) or Q represents a quota of pheromones allocated to each ants (often Q = 100). The idea is to supply all the way traveled by an ants homogeneously with pheromones.

The smallest lap is kept in memory if it is better than the last one to memorize. The ants start a cycle again from their initial city (kept in their taboo list).

Algorithm variants: MMAS

The Max-min ant system (MMAS) is an efficient variant of the algorithm. Only the best ants trace trails where the deposition of pheromones is limited by an upper bound and a lower bound. Thus we prevent a track from being too reinforced and we leave the possibility of exploring any track. In particular, this prevents premature convergence.