This article walks through a pipeline covering data exploration, transformations that bring features closer to a normal distribution, and regression with performance analysis.


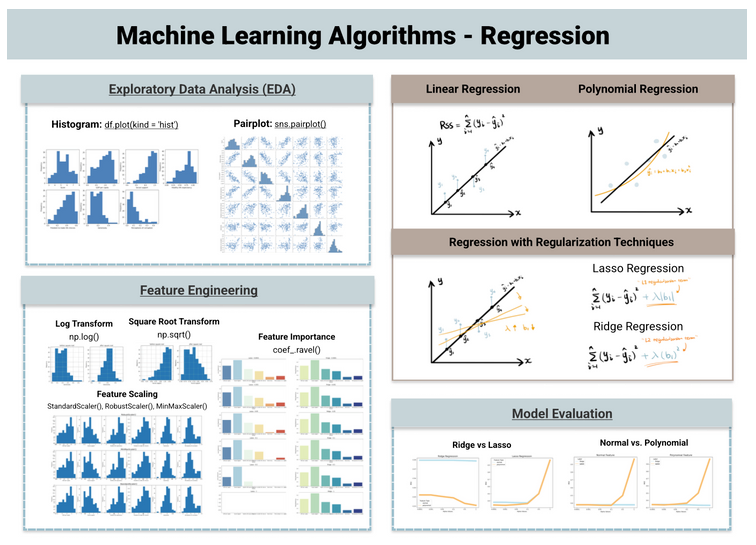

Now let's dive into the other category of supervised learning: regression, where the output variable is continuous and numerical. There are four common types of regression models: linear, lasso, ridge, and polynomial regression.

Exploration and preprocessing

This project uses regression models to predict countries' happiness scores from six other factors: “GDP per capita”, “Social support”, “Healthy life expectancy”, “Freedom to make life choices”, “Generosity” and “Perceptions of Corruption”.

I used the “World Happiness Report” dataset on Kaggle, which has 156 entries and 9 features.

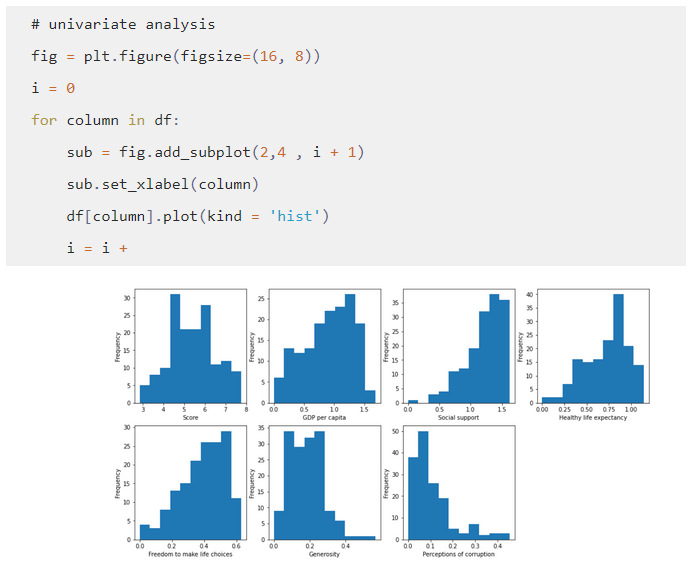

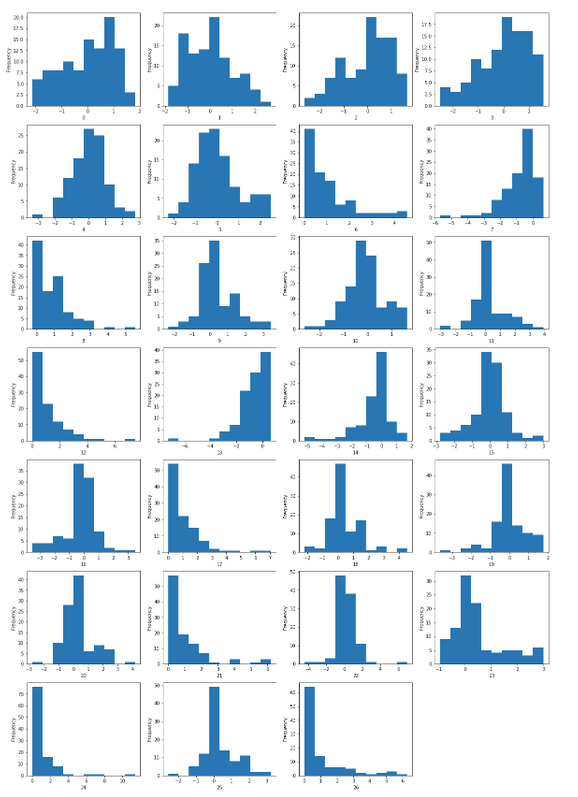

Plot a histogram of each feature to understand its distribution. As shown below, “Social support” appears to be heavily left-skewed, while “Generosity” and “Perceptions of Corruption” are right-skewed, which informs the choice of feature engineering techniques for transformation.

We can also combine the histograms with the skewness measure below to quantify how strongly each feature is skewed to the left or to the right.

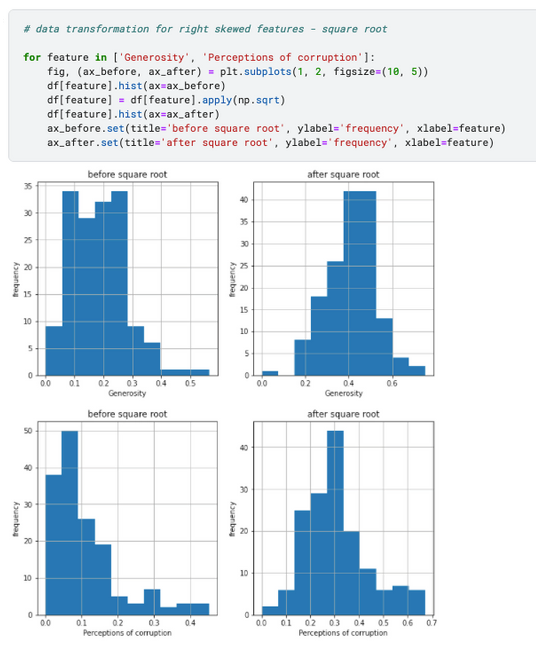
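The skewness measure can be computed directly with pandas. The sketch below uses synthetic columns as stand-ins (the column names and distributions are assumptions, not the World Happiness Report data) to show how the sign of `DataFrame.skew()` maps to left or right skew.

```python
import numpy as np
import pandas as pd

# Synthetic stand-in data (assumed distributions, NOT the Kaggle dataset)
rng = np.random.default_rng(0)
demo = pd.DataFrame({
    "right_skewed": rng.exponential(scale=1.0, size=1000),        # long right tail
    "left_skewed": 2.0 - rng.exponential(scale=0.3, size=1000),   # long left tail
    "symmetric": rng.normal(size=1000),                           # no pronounced tail
})

# Positive skewness -> right-skewed, negative -> left-skewed, near 0 -> symmetric
skewness = demo.skew()
print(skewness.round(2))
```

On a real dataframe, the same call (`df.skew()`) gives one value per numeric column, which can be read alongside the histograms.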

np.sqrt is applied to transform the right-skewed features, “Generosity” and “Perceptions of Corruption”. As a result, both features become more normally distributed.

np.log(2-df['Social support']) is applied to transform the left-skewed feature. Subtracting the feature from 2 reflects it so that its long tail points to the right, where the logarithm can compress it. The magnitude of the skewness decreases considerably, from 1.13 to 0.39.

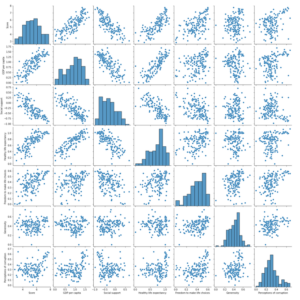
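The two transformations above can be tried on toy data. This is a minimal sketch assuming synthetic beta-distributed columns as stand-ins for the real features, so the skewness values it prints will not match the ones reported in this article.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(42)
n = 500

# Synthetic stand-ins for the real columns (assumed distributions, not the Kaggle data)
df_demo = pd.DataFrame({
    "Generosity": rng.beta(2, 8, n),               # right-skewed, values in [0, 1]
    "Social support": 1.6 * rng.beta(5, 1.5, n),   # left-skewed, values in [0, 1.6]
})
skew_before = df_demo.skew()

transformed = pd.DataFrame({
    # the square root compresses the long right tail
    "Generosity": np.sqrt(df_demo["Generosity"]),
    # reflect around 2 so the tail points right, then compress it with the log
    "Social support": np.log(2 - df_demo["Social support"]),
})
skew_after = transformed.skew()

print(pd.DataFrame({"before": skew_before, "after": skew_after}).round(2))
```

Note that the reflection requires every value to be below 2, otherwise the logarithm is undefined.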

sns.pairplot(df) can be used to visualize the relationships between the features after the transformation. The scatterplots suggest that “GDP per capita”, “Social support” and “Healthy life expectancy” are correlated with the target variable “Score”, and therefore may have larger coefficient values. Let's find out whether this is the case in a later section.

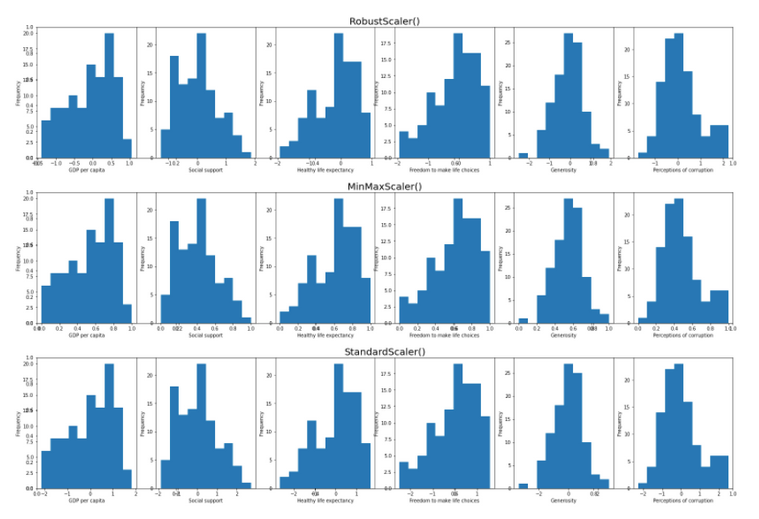
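As a numeric complement to the scatterplots, each feature's correlation with the target can be ranked. The sketch below is illustrative only: the data is synthetic, and the relationship between the hypothetical "GDP per capita" column and "Score" is built in by construction.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(1)
n = 156

# Synthetic stand-in data: one informative feature, one pure-noise feature
gdp = rng.normal(size=n)
generosity = rng.normal(size=n)
score = 5.4 + 1.0 * gdp + rng.normal(scale=0.3, size=n)

demo = pd.DataFrame({
    "GDP per capita": gdp,
    "Generosity": generosity,
    "Score": score,
})

# Pearson correlation of every feature with the target, strongest first
corr_with_target = demo.corr()["Score"].drop("Score").sort_values(ascending=False)
print(corr_with_target.round(2))
```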

Since regularization techniques penalize the magnitude of the coefficients, the performance of the model becomes sensitive to the feature scale. Therefore, the features should be transformed to the same scale. I experimented with three scalers: StandardScaler, MinMaxScaler and RobustScaler.

As you can see, the scalers do not affect the shape of each feature's distribution, but they do change the range of the data.
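A small check of that claim, assuming synthetic input columns (hypothetical names, not the article's data): each scaler is a linear rescaling, so the skewness of every column stays the same while the minimum and maximum change.

```python
import numpy as np
import pandas as pd
from sklearn.preprocessing import MinMaxScaler, RobustScaler, StandardScaler

rng = np.random.default_rng(0)
# Synthetic features on very different scales (assumed, for illustration)
X_demo = pd.DataFrame({
    "skewed": rng.exponential(2.0, 200),
    "wide": rng.normal(50.0, 10.0, 200),
})

scalers = {
    "StandardScaler": StandardScaler(),  # zero mean, unit variance
    "MinMaxScaler": MinMaxScaler(),      # rescales each column to [0, 1]
    "RobustScaler": RobustScaler(),      # centers on the median, scales by the IQR
}

scaled_frames = {}
for name, scaler in scalers.items():
    scaled = pd.DataFrame(scaler.fit_transform(X_demo), columns=X_demo.columns)
    scaled_frames[name] = scaled
    print(name)
    print(pd.DataFrame({
        "min": scaled.min(),
        "max": scaled.max(),
        "skew": scaled.skew(),  # unchanged by a linear rescaling
    }).round(2))
```

In a train/test setting, the scaler would normally be fitted on the training split only and then applied to the test split with `transform`.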

Regressions and comparisons

Now let's compare three linear regression models below – linear, ridge, and lasso.

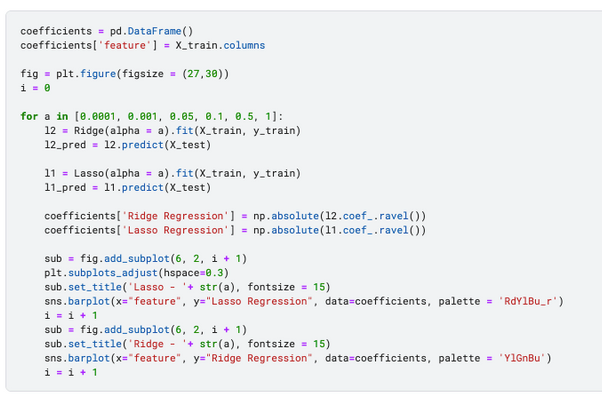

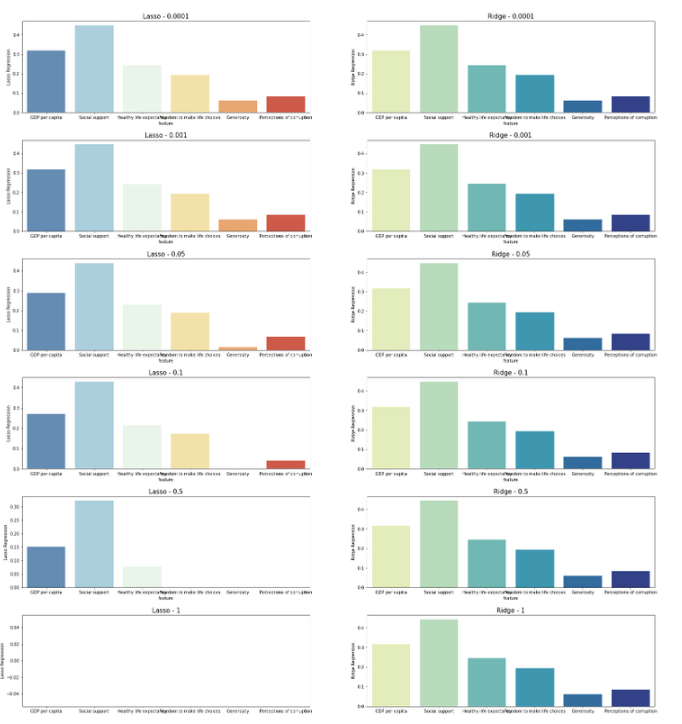
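For readers who want to reproduce this kind of comparison, here is a minimal sketch on synthetic data. The feature matrix, the true coefficients, the split and `alpha=0.1` are all assumptions chosen for illustration; they are not the settings used for the results in this article.

```python
import numpy as np
from sklearn.linear_model import Lasso, LinearRegression, Ridge
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler

# Synthetic regression problem with 6 features (stand-in for the real dataset)
rng = np.random.default_rng(0)
X = rng.normal(size=(156, 6))
true_coef = np.array([1.0, 0.8, 0.6, 0.3, 0.0, 0.0])  # hypothetical weights
y = 5.4 + X @ true_coef + rng.normal(scale=0.5, size=156)

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=0
)

# Fit the scaler on the training split only, then apply it to both splits
scaler = StandardScaler().fit(X_train)
X_train_s, X_test_s = scaler.transform(X_train), scaler.transform(X_test)

models = {
    "linear": LinearRegression(),
    "ridge": Ridge(alpha=0.1),   # L2 penalty
    "lasso": Lasso(alpha=0.1),   # L1 penalty
}

test_mse = {}
for name, model in models.items():
    model.fit(X_train_s, y_train)
    test_mse[name] = mean_squared_error(y_test, model.predict(X_test_s))
    print(f"{name:>6}: MSE={test_mse[name]:.3f}, coef={np.round(model.coef_, 2)}")
```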

The next step is to experiment with how different lambda values (alpha in scikit-learn) affect the models and, more importantly, how feature importance and coefficient values change as alpha increases from 0.0001 to 1.

Based on the coefficient values generated from the Lasso and Ridge models, 'GDP per capita', 'Social support' and 'Healthy life expectancy' appear to be the 3 most important features. This aligns with the earlier scatterplot results, suggesting that they are the main drivers of a country's happiness score.

The side-by-side comparison also indicates that increasing alpha affects Lasso and Ridge to different degrees: Lasso drives more coefficients all the way to zero, effectively removing those features, while Ridge only shrinks them. This is why Lasso is often chosen for feature selection.
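The difference between the two penalties can be seen by counting exact-zero coefficients over the same alpha range. This is a sketch on synthetic data with hypothetical true weights, so the counts are illustrative rather than a reproduction of the figures above.

```python
import numpy as np
import pandas as pd
from sklearn.linear_model import Lasso, Ridge
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
n_samples, n_features = 156, 6

# Standardized synthetic features and a target with a few weak drivers
X = StandardScaler().fit_transform(rng.normal(size=(n_samples, n_features)))
true_coef = np.array([1.0, 0.8, 0.6, 0.1, 0.05, 0.0])  # hypothetical weights
y = 5.4 + X @ true_coef + rng.normal(scale=0.5, size=n_samples)

alphas = [0.0001, 0.001, 0.01, 0.1, 1.0]
rows = []
for alpha in alphas:
    ridge = Ridge(alpha=alpha).fit(X, y)
    lasso = Lasso(alpha=alpha, max_iter=10_000).fit(X, y)
    rows.append({
        "alpha": alpha,
        # Ridge shrinks coefficients but does not set them exactly to zero
        "ridge_zero_coefs": int(np.sum(ridge.coef_ == 0)),
        # Lasso sets coefficients exactly to zero once alpha is large enough
        "lasso_zero_coefs": int(np.sum(lasso.coef_ == 0)),
        "ridge_max_abs_coef": float(np.max(np.abs(ridge.coef_))),
        "lasso_max_abs_coef": float(np.max(np.abs(lasso.coef_))),
    })

zero_counts = pd.DataFrame(rows).set_index("alpha")
print(zero_counts.round(3))
```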

Additionally, polynomial features are introduced to improve the basic linear regression, increasing the number of features from 6 to 27.

```python
# apply polynomial features
from sklearn.preprocessing import PolynomialFeatures

pf = PolynomialFeatures(degree=2, include_bias=False)
X_train_poly = pf.fit_transform(X_train)
# reuse the transformer fitted on the training data
X_test_poly = pf.transform(X_test)
```

Look at their distribution after the polynomial transformation.

Model evaluation

As the last step, evaluate and compare the performance of the Lasso and Ridge models, before and after adding polynomial features. In the code below, I created four models:

- l2: ridge without polynomial features

- l2_poly: ridge with polynomial features

- l1: lasso without polynomial features

- l1_poly: lasso with polynomial features
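The four configurations listed above could be set up roughly as follows. This is a sketch, not the original code: the synthetic data contains an interaction term by construction (so polynomial features help here by design), and the pipeline order, the split and `alpha = 0.01` are assumptions.

```python
import numpy as np
from sklearn.linear_model import Lasso, Ridge
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import PolynomialFeatures, StandardScaler

# Synthetic data with a built-in interaction between the first two features
rng = np.random.default_rng(0)
X = rng.normal(size=(300, 6))
y = (5.4 + X[:, 0] + 0.8 * X[:, 1] + 1.0 * X[:, 0] * X[:, 1]
     + rng.normal(scale=0.3, size=300))
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=0
)

alpha = 0.01  # assumed regularization strength


def poly():
    """Fresh degree-2 expansion for each pipeline."""
    return PolynomialFeatures(degree=2, include_bias=False)


models = {
    "l2": make_pipeline(StandardScaler(), Ridge(alpha=alpha)),
    "l2_poly": make_pipeline(poly(), StandardScaler(), Ridge(alpha=alpha)),
    "l1": make_pipeline(StandardScaler(), Lasso(alpha=alpha, max_iter=10_000)),
    "l1_poly": make_pipeline(poly(), StandardScaler(),
                             Lasso(alpha=alpha, max_iter=10_000)),
}

mse = {}
for name, model in models.items():
    model.fit(X_train, y_train)
    mse[name] = mean_squared_error(y_test, model.predict(X_test))
    print(f"{name:>8}: test MSE = {mse[name]:.3f}")
```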

Commonly used regression model evaluation metrics are MAE, MSE, RMSE, and R-squared – see my article on “A Practical Guide to Linear Regression” for a detailed explanation. Here I used MSE (mean squared error) to evaluate the performance of the model.
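As a quick refresher, the four metrics can be computed by hand with NumPy and cross-checked against scikit-learn. The target and prediction values below are made up for the example.

```python
import numpy as np
from sklearn.metrics import mean_absolute_error, mean_squared_error, r2_score

# Made-up values, for illustration only
y_true = np.array([3.0, 5.0, 2.5, 7.0])
y_pred = np.array([2.5, 5.0, 4.0, 8.0])

residuals = y_true - y_pred

mae = np.mean(np.abs(residuals))   # mean absolute error -> 0.75
mse = np.mean(residuals ** 2)      # mean squared error -> 0.875
rmse = np.sqrt(mse)                # root mean squared error, same unit as y
# R-squared: 1 - (residual sum of squares / total sum of squares)
r2 = 1 - np.sum(residuals ** 2) / np.sum((y_true - y_true.mean()) ** 2)

# Cross-check against scikit-learn's implementations
sk_mae = mean_absolute_error(y_true, y_pred)
sk_mse = mean_squared_error(y_true, y_pred)
sk_r2 = r2_score(y_true, y_pred)

print(f"MAE={mae:.3f} MSE={mse:.3f} RMSE={rmse:.3f} R2={r2:.3f}")
```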

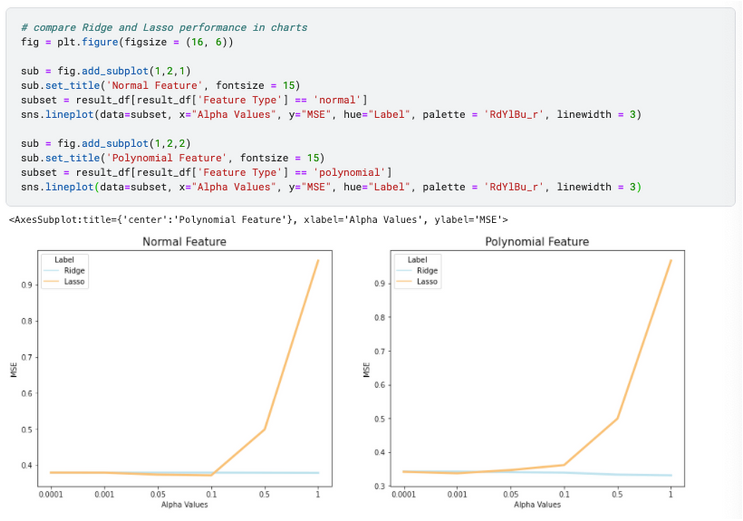

1) Comparing Ridge and Lasso in a graph indicates that they have similar accuracy when alpha values are low, but Lasso deteriorates significantly when alpha is closer to 1.

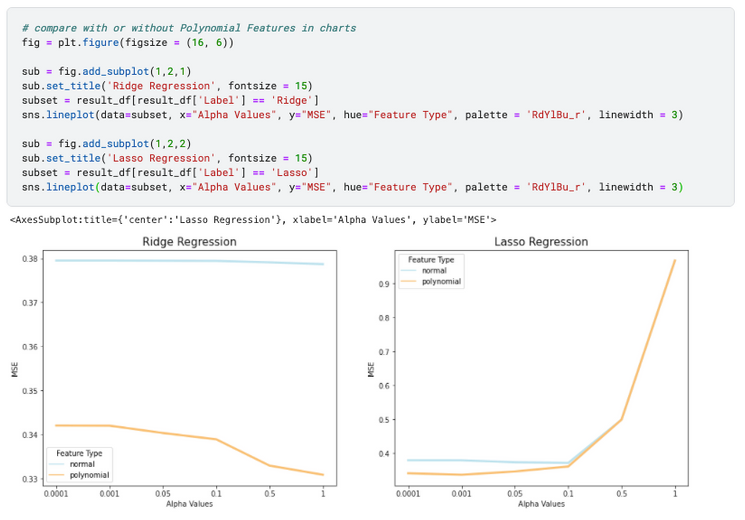

2) Comparing the models with and without polynomial features in a graph shows that polynomial features decrease MSE in general, and hence improve model performance. This effect is more significant in Ridge regression when alpha increases to 1, and more significant in Lasso regression when alpha is closer to 0.0001.
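A table of test MSE per alpha, like the one behind these two comparisons, can be produced with a small grid loop. The sketch below assumes synthetic data with a built-in interaction term and a hypothetical helper `make_model`; its numbers will differ from the graphs in this article.

```python
import numpy as np
import pandas as pd
from sklearn.linear_model import Lasso, Ridge
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import PolynomialFeatures, StandardScaler

# Synthetic data (assumed): linear effects plus one interaction term
rng = np.random.default_rng(0)
X = rng.normal(size=(300, 6))
y = (5.4 + X[:, 0] + 0.8 * X[:, 1] + 1.0 * X[:, 0] * X[:, 1]
     + rng.normal(scale=0.3, size=300))
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=0
)


def make_model(kind, alpha, use_poly):
    """Hypothetical helper: build a ridge ('l2') or lasso ('l1') pipeline."""
    steps = [PolynomialFeatures(degree=2, include_bias=False)] if use_poly else []
    steps.append(StandardScaler())
    if kind == "l2":
        steps.append(Ridge(alpha=alpha))
    else:
        steps.append(Lasso(alpha=alpha, max_iter=50_000))
    return make_pipeline(*steps)


alphas = [0.0001, 0.001, 0.01, 0.1, 1.0]
rows = []
for alpha in alphas:
    row = {"alpha": alpha}
    for kind in ("l2", "l1"):
        for use_poly in (False, True):
            name = kind + ("_poly" if use_poly else "")
            model = make_model(kind, alpha, use_poly).fit(X_train, y_train)
            row[name] = mean_squared_error(y_test, model.predict(X_test))
    rows.append(row)

# One row per alpha, one column per model configuration
results = pd.DataFrame(rows).set_index("alpha")
print(results.round(3))
```

Plotting each column of `results` against alpha on a logarithmic x-axis gives a side-by-side view of the four configurations.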

However, even though the polynomial transformation improves the performance of the regression models, it makes them harder to interpret: it is difficult to distinguish the main drivers of the model in a polynomial regression. Lower error doesn't always guarantee a better model, and it's all about finding the right balance between predictive accuracy and interpretability based on the goals of the project.