Contents

TogglePost-optimal sensitivity analysis

When the basic fix optimal of the PL problem is analyzed to answer questions about changes in its formulation, the study is called post-optimal sensitivity analysis.

We call post-optimization all the techniques making it possible to obtain the optimum of the PL problem when certain data have undergone modifications.

We consider the problem of linear programming general in its stand art form:

This study can be motivated by several reasons:

- the data of the problem is not known with exactitude, in which case it is important to determine to what extent this affects the proposed solution;

- we want to assess the consequences of a policy change that would modify the facts of the problem.

Marginal costs

If the problem posed consists in transforming goods to sell a production with a better profit and the maximum increase in income which results from the possibility of having an additional unit of one of the goods, is the marginal value of this good . Very often, the qualifier marginal cost is also used in this case.

If a difference variable is not zero, in the optimal solution, it means that the corresponding good is already surplus. Therefore, having an additional unit of this good will have no influence on the income. The slack variable has zero marginal value.

On the other hand, if a variance variable is zero in the optimal solution, it is because the corresponding good is totally used. Subsequently, a variation in availability will generally have an influence on income. This is why this zero deviation variable in the optimal solution has a non-zero marginal value, and this marginal value specifies the variation of the economic function resulting from the use of an additional unit of the associated good.

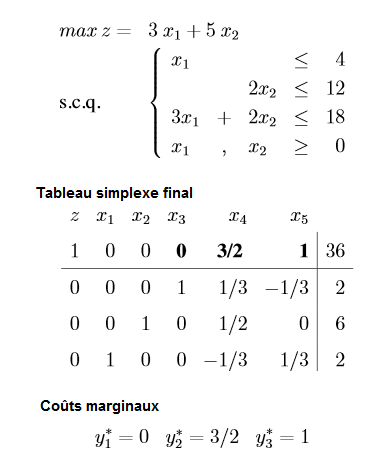

with the solution vector x * = (2,6). Attention here the line shows the value of Z and not of -Z (hence the positive values).

We can measure the sensitivity of the optimal solution to a change in a line term or a coefficient in the objective.

Study 1: variation in objective function

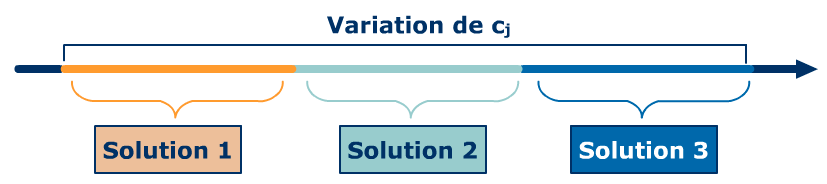

The variation of a coefficient in the objective function over a certain interval does not lead to a modification of the optimal solution. Outside this interval, we have a new solution which itself remains optimal over another interval. We can thus highlight a finite number of variation intervals for cj, with on each of them an invariant solution.

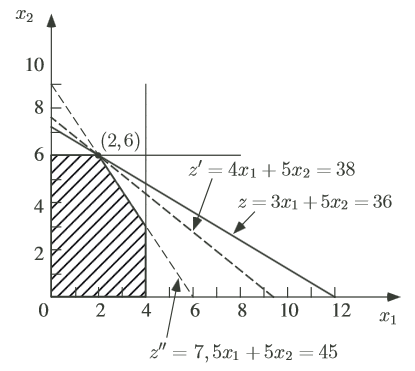

Let us change the objective function by max z '= 4 * x1 + 5 * x2. The value of the objective function will change by x *1 = 2, while the solution vector will not be modified as shown in graphics resolution :

Likewise if c1 changes from 3 to 2, only the value of the objective function will be modified. To calculate the interval over which the coefficient x *1 is valid, we need to evolve the objective function until it is parallel to the other constraints.

That is to say when the slope of the objective function is equal to the slope of the saturated stresses for the solution vector s *:

- z = c1* x1 + 5 * x2 and 2 * x2 = 12 so -c1/ 5 = 0, c1 = 0;

- z = c1* x1 + 5 * x2 and 3 * x1 + 2 * x2 = 18 so -c1/ 5 = -3/2, c1 =15/2.

The coefficient x *1 is therefore valid for c1 between 0 and 15/2.

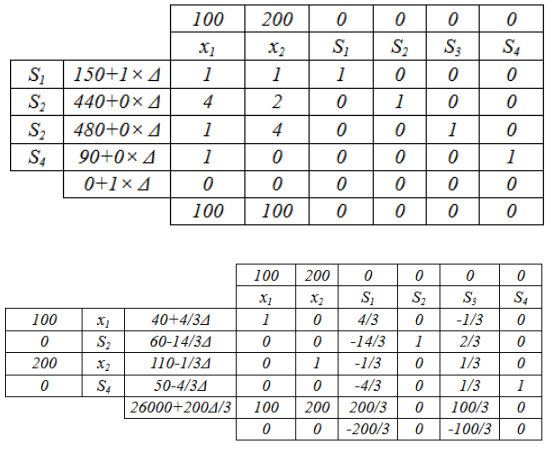

When the problem is of large dimension, it is possible to calculate the variation of the cost using the simplex by adding a delta on the cost to vary as in the following example:

The solution remains optimal as long as the line of -Z has negative numbers so if:

Study 2: variation in the second limb

When the second member of a constraint varies (within a certain interval), if this constraint was not saturated, then the solution does not change and neither does the optimal value of the objective function. This result is obvious since the optimal solution not satisfying the constraint with equality, one can vary (a little) the second member without “touching” the optimal solution.

On the other hand, if the constraint were checked with equality at the optimum, one has an interval of variation for the second member such as:

- The solution changes but the zero variables remain zero and the non-zero variables remain non-zero: the structure of the solution does not change.

- The variation of the second member i causes a variation of the optimal value of the objective function equal to ui* di, therefore proportional to di.

If we leave the interval, we have a new dual cost. We can thus highlight a finite number of variation intervals for the second member with, on each of them, a value for the dual cost. On the different intervals, the sensitivity analysis does not give the optimal solution since the numerical values of the variables depend on the exact value of the second member.

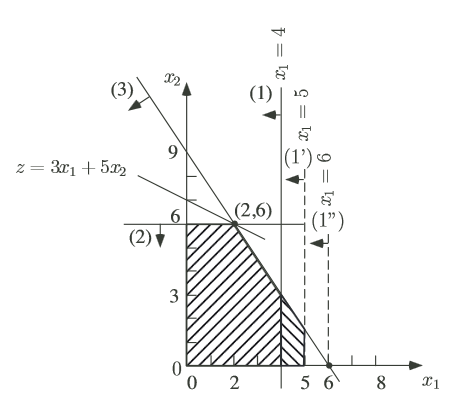

Consider in the example that b1 = 4 becomes b '1 = 5. Let's perform a graphic resolution of the new problem:

When is the decrease in b1 ? As the explanation on marginal costs explains, decreasing 1 of the second member causes the value of the objective function to decrease by an amount equal to the marginal cost. So decreasing 1 will not result in a change.

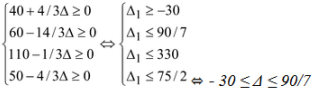

To know the possibilities of evolution of the stock without changing the value of the optimal solution, it is necessary to add a delta in the second member studied as shown in the following example:

The solution remains optimal as long as the simplex is not degenerated, i.e. the second member is positive:

Consider now an increase of the third second member to b '3 = 19. Since the marginal cost is not zero, the optimal solution will be modified as shown by the graphical resolution.

Study 3: variation of non-base variables

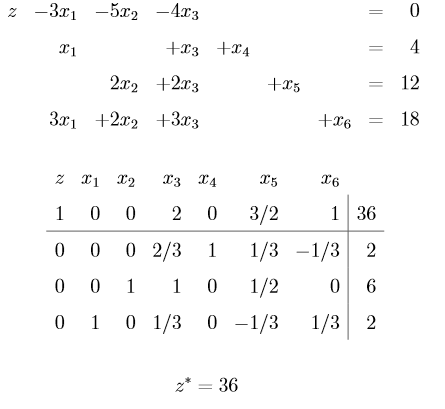

Let's go back to the previous example with a new constraint and a new variable:

We have the following reduced cost: d3 = -2. This means that to build a unit of x3 would decrease the value of the objective function by 2 (since it is outside the base x *3 = 0).

Study 4: variation in production

Indeed by adding a delta variable in the cost of the variable based on the target constraint then it suffices to inject the optimal vector and solve the equation as in the following example: